Quantum computers manipulate qubits through operations called gates, and the Hadamard is one of the most important. It is often the first operation in a quantum algorithm, and understanding it unlocks much of what follows in this book.

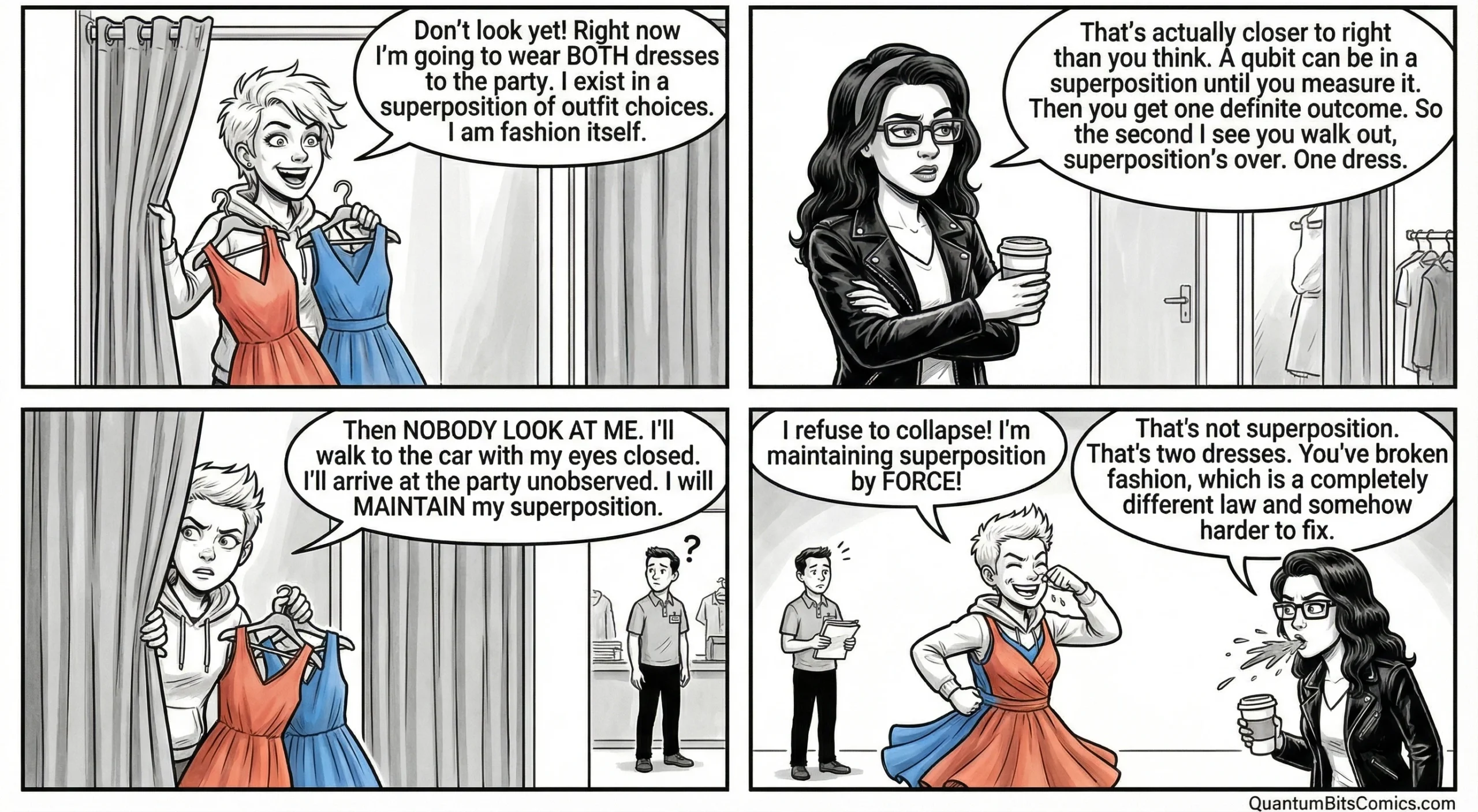

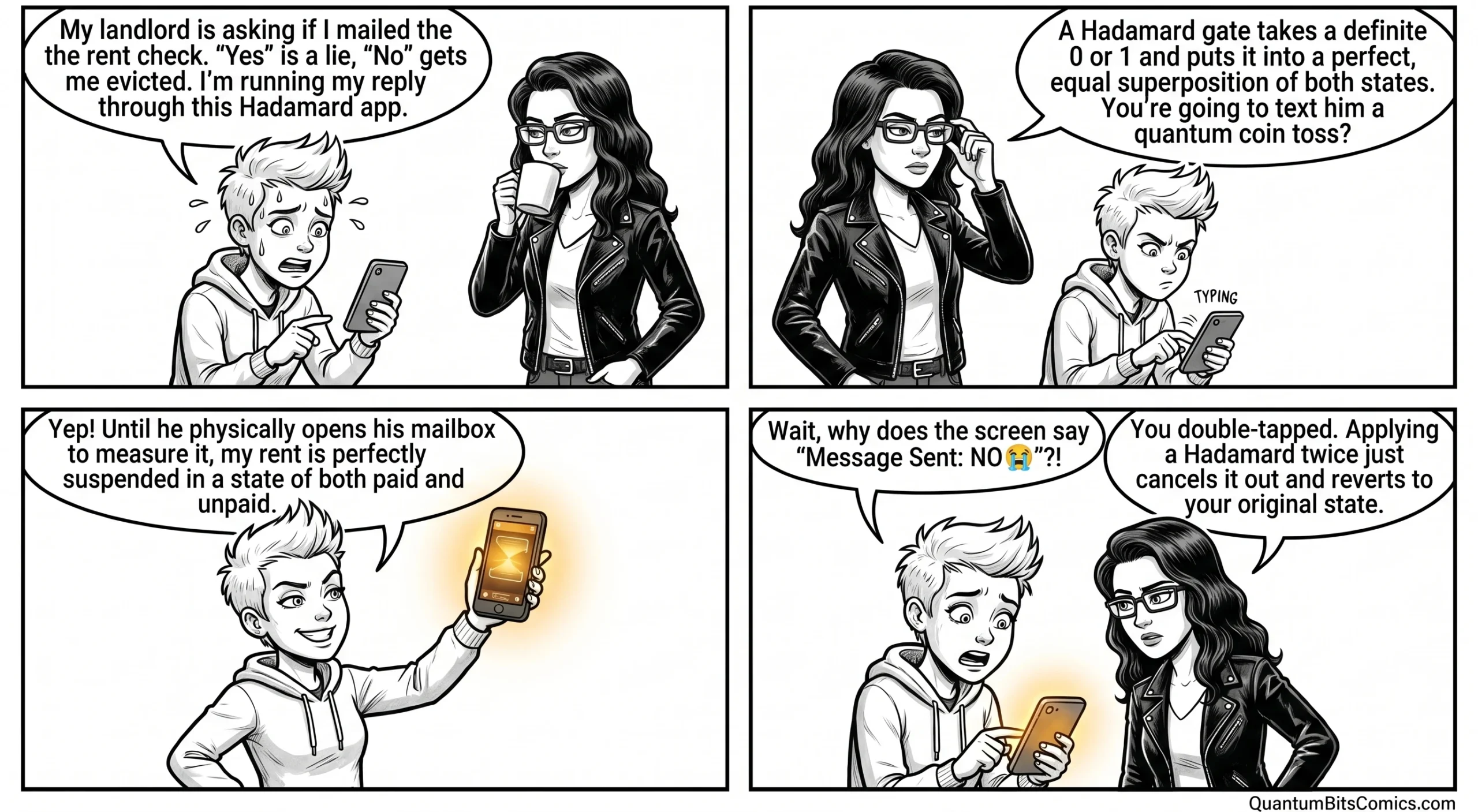

Here is what it does: it takes a qubit in a definite state, say 0, and transforms it into an equal superposition of 0 and 1. Mathematically, the qubit ends up with an amplitude of 1/√2 for 0 and 1/√2 for 1. Because the probability of each measurement outcome is the squared magnitude of its amplitude, (1/√2)² = 1/2, measuring the qubit immediately after applying a Hadamard gate gives 0 or 1 with exactly 50-50 odds.
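To make the amplitudes concrete, here is a minimal sketch in plain Python. It represents a single-qubit state as a pair of amplitudes `[amplitude_of_0, amplitude_of_1]` and the Hadamard as the standard 2×2 matrix with entries ±1/√2. The helpers `apply_gate` and `measurement_probabilities` are illustrative names, not part of any quantum SDK.

```python
import math

# Hadamard matrix: H = (1/sqrt(2)) * [[1, 1], [1, -1]]
S = 1 / math.sqrt(2)
H = [[S, S],
     [S, -S]]


def apply_gate(gate, state):
    """Multiply a 2x2 gate matrix by a state [amplitude_of_0, amplitude_of_1]."""
    return [gate[0][0] * state[0] + gate[0][1] * state[1],
            gate[1][0] * state[0] + gate[1][1] * state[1]]


def measurement_probabilities(state):
    """Born rule: each outcome's probability is the squared magnitude of its amplitude."""
    return [abs(a) ** 2 for a in state]


zero = [1.0, 0.0]           # the definite state 0
plus = apply_gate(H, zero)  # equal superposition: both amplitudes are 1/sqrt(2), about 0.7071

print(plus)
print(measurement_probabilities(plus))  # both probabilities are about 0.5
```

Starting from 1 instead (`[0.0, 1.0]`) also gives 50-50 odds, but the result is `[S, -S]`: the amplitude for 1 carries a minus sign. Measurement alone cannot see that sign, yet it matters as soon as the qubit goes through another gate and its amplitudes interfere.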

Why is this useful? Because superposition is the raw material that quantum algorithms need. Before a quantum computer can exploit interference to amplify correct answers and suppress wrong ones, it must first create superpositions to work with. The Hadamard gate is the standard tool for that job.

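One elegant property: applying a Hadamard gate twice returns the qubit to its original state. The first application creates a superposition; the second undoes it through interference, because the contributions that would lead away from the original state cancel out. Reversibility itself is characteristic of all quantum gates and reflects a deep principle: quantum operations (other than measurement) are always reversible. What makes the Hadamard special is that it is its own inverse, so the gate that undoes it is simply another Hadamard.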
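A quick numerical check in plain Python makes this concrete. Every quantum gate is a unitary matrix U whose inverse is its conjugate transpose U†; the Hadamard matrix is real and symmetric, so H† = H and therefore H·H = I. The helper names `matmul` and `apply_gate` are illustrative, not taken from any quantum library.

```python
import math

S = 1 / math.sqrt(2)
H = [[S, S],
     [S, -S]]


def matmul(a, b):
    """Product of two 2x2 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def apply_gate(gate, state):
    """Apply a 2x2 gate to a state [amplitude_of_0, amplitude_of_1]."""
    return [sum(gate[i][k] * state[k] for k in range(2)) for i in range(2)]


# H times H should be the identity matrix [[1, 0], [0, 1]], up to rounding.
HH = matmul(H, H)
print(HH)

# Follow the amplitudes: start in 0 and apply H twice.
once = apply_gate(H, [1.0, 0.0])  # [S, S]: equal superposition
twice = apply_gate(H, once)       # amplitude of 1 is S*S + (-S)*S = 0: the two paths cancel
print(twice)                      # approximately [1.0, 0.0], the original state
```

The same check starting from 1 (`[0.0, 1.0]`) also returns the original state; in that case it is the contributions to 0 that cancel.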

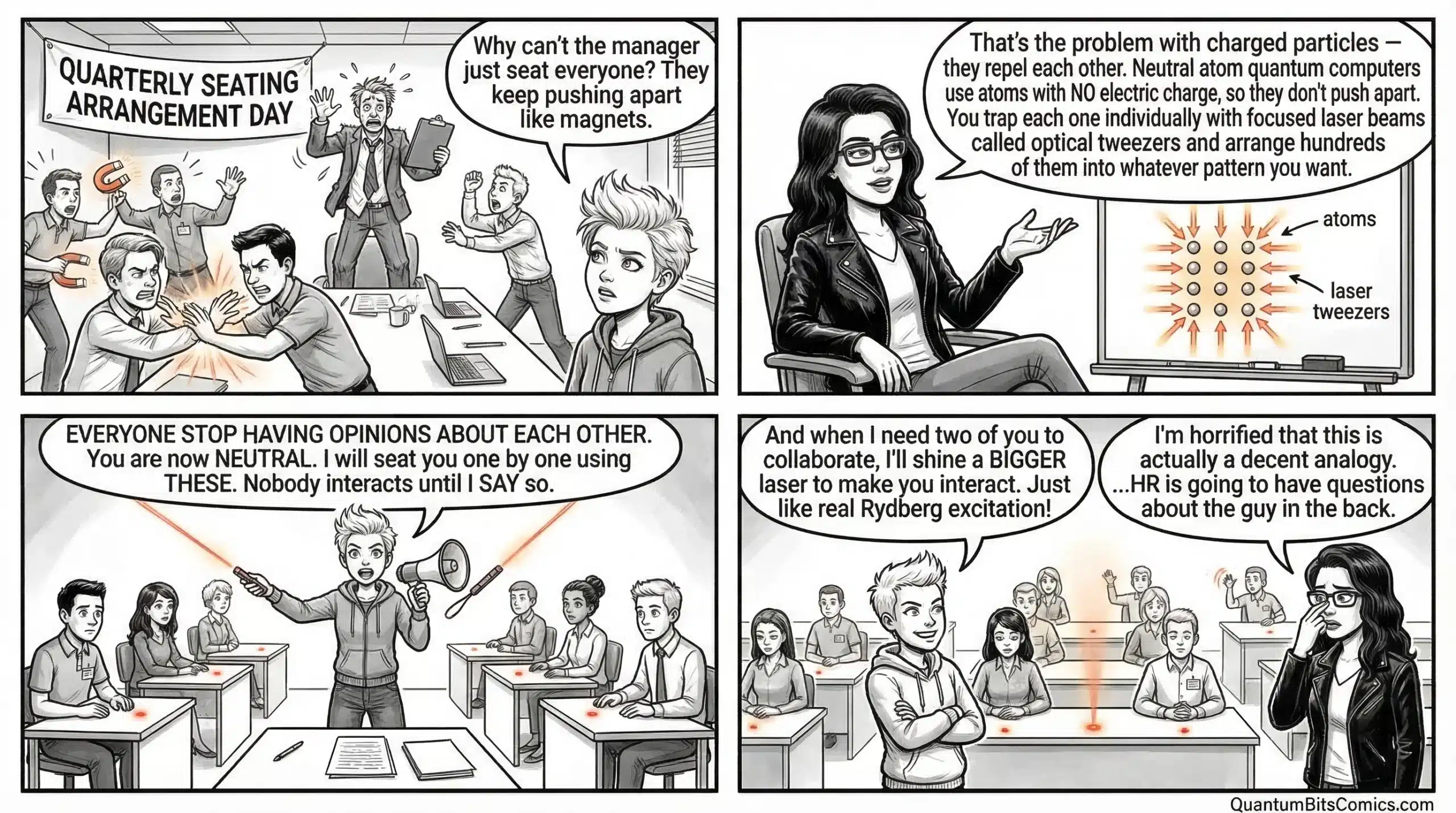

How the gate is physically implemented depends on the hardware. In superconducting systems, it is a precisely calibrated microwave pulse applied to a tiny circuit. In trapped-ion computers, it is a laser pulse tuned to a specific atomic transition. In neutral-atom systems like those built by QuEra, it is similarly a laser pulse, but applied to atoms held in optical tweezers. The physics differs, but the ideal mathematical effect is identical across all platforms; real devices reproduce it only approximately, because every physical pulse carries some error.

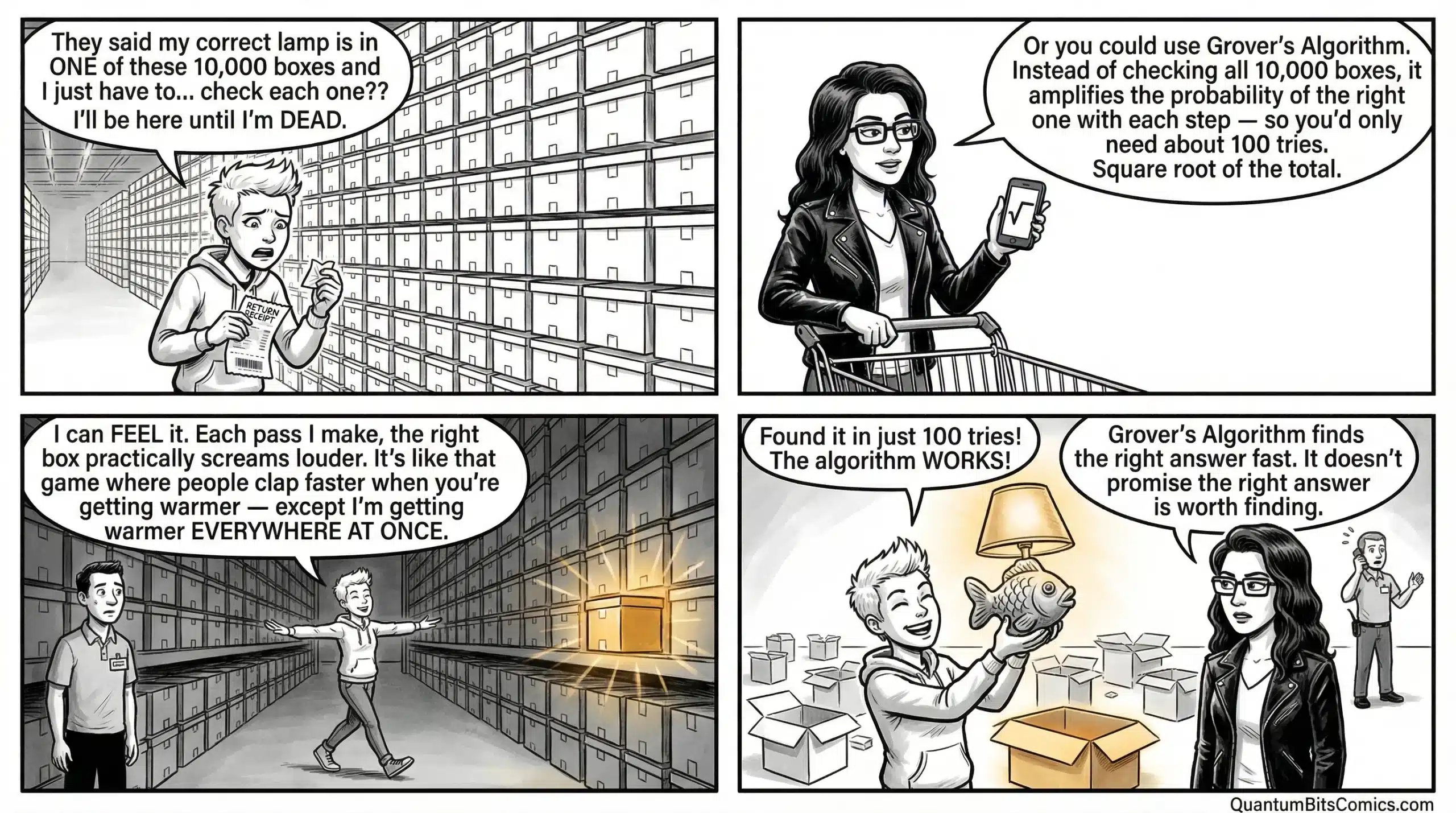

When you apply a Hadamard gate to each of n qubits that all start in 0, you create an equal superposition over all 2^n possible combinations of 0s and 1s. This is the starting configuration for algorithms like Grover’s search and the Deutsch-Jozsa algorithm, where the computation begins by exploring all possibilities equally before interference narrows the outcomes.
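A minimal state-vector sketch in plain Python shows the effect. An n-qubit state is stored as a list of 2^n amplitudes, one per bit string, and applying H to every qubit of the all-0 state should leave each amplitude equal to 1/√(2^n). The qubit ordering (qubit 0 is the least significant bit of the list index) is an assumption of this sketch, and `apply_hadamard` and `hadamard_all` are hypothetical helpers written for illustration.

```python
import math


def apply_hadamard(state, qubit):
    """Apply H to one qubit of an n-qubit state vector (a list of 2**n amplitudes).

    Assumed convention: bit `qubit` of a list index (0 = least significant)
    is that qubit's value in the corresponding basis state.
    """
    s = 1 / math.sqrt(2)
    new = state[:]
    step = 1 << qubit
    for i in range(len(state)):
        if i & step == 0:  # pair index i (qubit is 0) with i | step (qubit is 1)
            a, b = state[i], state[i | step]
            new[i] = s * (a + b)
            new[i | step] = s * (a - b)
    return new


def hadamard_all(n):
    """Start in 00...0 and apply H to every one of the n qubits."""
    state = [0.0] * (2 ** n)
    state[0] = 1.0
    for q in range(n):
        state = apply_hadamard(state, q)
    return state


n = 3
state = hadamard_all(n)
for index, amp in enumerate(state):
    # Each of the 8 bit strings gets amplitude about 0.3536 and probability about 0.125.
    print(format(index, f"0{n}b"), round(amp, 4), round(amp ** 2, 4))
```

Applying a Hadamard to every qubit a second time brings the state back to 000, the multi-qubit version of the Hadamard undoing itself.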

Subscribe on Substack at https://qubitguy.substack.com/